Big data refers to the processing of massive volumes of structured and unstructured data, typically for marketing and business analysis. Numerous data warehousing products are available on the market, and big data support through cloud platforms has become an especially popular option.

Among the three major cloud competitors, Google is investing heavily in its cloud platform to gain ground. Google BigQuery is Google’s fully managed, serverless data warehouse, and it has quickly become a prominent option for big data analysis.


Google BigQuery is a highly scalable, fast data warehouse for enterprises that assists data analysts with big data analytics at any scale. It is also a low-cost warehouse that helps data analysts become more productive. There is no infrastructure to manage, so you can focus on the meaningful insights derived from data analysis.

In the next sections, we discuss Google BigQuery as a technology: its features, its analytics capabilities, and how it performs compared with similar technologies on the market.

Know More about Google BigQuery and Its Components

We have already mentioned that Google BigQuery is a low-cost data warehouse solution. In addition, BigQuery lets enterprises set up a warehouse in a few seconds and query the data immediately. It uses SQL and provides JDBC and ODBC drivers for quick data integration. BigQuery also scales seamlessly to very large capacities, and it executes queries in parallel and optimizes their performance on your behalf.

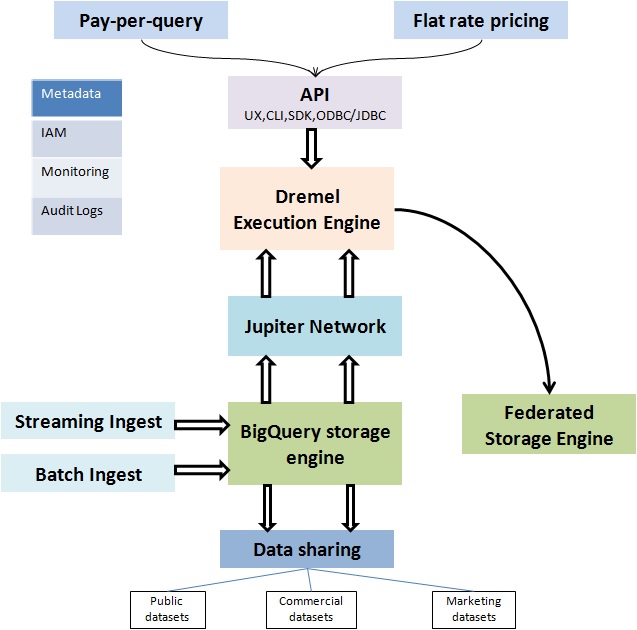

BigQuery is a sophisticated service with 12 user-facing components:

1. Opinionated Storage Engine: BigQuery's storage engine continuously optimizes and evolves how data is stored, without any disruption.

2. Jupiter Network: Google's internal data center network, which allows BigQuery to separate storage and compute.

3. Standard SQL and Dremel Execution Engine: Dremel provides smart scheduling and pipelined execution of SQL queries.

4. Serverless Service Model: The serverless model provides the highest level of abstraction, automation, and manageability.

5. Enterprise-Grade Data Sharing: Because compute and storage are separated, BigQuery can share exabyte-scale datasets. You can even share data with other organizations: you pay for the storage, and they pay per query.

6. Federated Query Engine: If the data is in Google Cloud Storage (GCS), Google Drive, or Bigtable, you can query it from BigQuery without moving it.

7. IAM, Audit Logs and Authentication: BigQuery gives organizations fine-grained control over users and roles. OAuth and service accounts are the two modes of authentication used for access control.

8. Datasets: Public, commercial, and marketing datasets are available, along with a free pricing tier.

9. UX, CLI, SDK, ODBC/JDBC, and API: These are the access patterns, all of which wrap the REST API.

10. Pay-Per-Query and Flat-Rate Pricing: Two pricing models to suit different user needs.

11. Streaming Ingest: Capable of ingesting millions of rows at a time.

12. Batch Ingest: Capable of loading millions of rows, though not as quickly as streaming ingest.
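The federated query component can be illustrated with the table resource of the BigQuery REST API. The following is a minimal sketch, assuming a hypothetical project, dataset, table, and bucket name. It only builds the JSON body that a `tables.insert` request would carry for a table backed by files in Cloud Storage; it does not call the API.

```python
import json


def external_table_body(project_id, dataset_id, table_id, gcs_uris, source_format="CSV"):
    """Build a tables.insert request body for a table backed by files in GCS."""
    return {
        "tableReference": {
            "projectId": project_id,
            "datasetId": dataset_id,
            "tableId": table_id,
        },
        # The data stays in Cloud Storage; BigQuery reads it at query time.
        "externalDataConfiguration": {
            "sourceUris": list(gcs_uris),
            "sourceFormat": source_format,
            "autodetect": True,  # let BigQuery infer the schema
        },
    }


# All identifiers below are hypothetical placeholders.
body = external_table_body(
    "my-project", "analytics", "raw_events", ["gs://my-bucket/events/*.csv"]
)
print(json.dumps(body, indent=2))
```

Once such a table exists, it can be queried with ordinary SQL like any native table, which is what "no data movement" means in practice.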


Comprehensive List of Google BigQuery Features

Google BigQuery has a large number of features. Let’s look at the most important ones.

- Real-Time Analytics: BigQuery provides a high-speed streaming API that supports real-time analytics.

- High Availability: Replicated, durable storage keeps data available even in the case of extreme failures.

- Standard SQL: BigQuery supports standard SQL that is compliant with ANSI SQL:2011, which reduces the need to rewrite code, and it provides JDBC and ODBC drivers.

- Serverless: BigQuery lets you focus on the data and the analysis, because the serverless warehouse provisions the resources you need on demand.

- Locality: You can choose to store the data in European or US locations, which gives you geographic control over where the data resides.

- Security: You can control data access with BigQuery's fine-grained identity and access management. Data is encrypted both in transit and at rest.

- Data Transfer Service: The BigQuery Data Transfer Service automatically transfers data from external sources such as AdWords and YouTube on a scheduled basis.

- Automatic Backup: Data in BigQuery is replicated automatically, and a 7-day change history is kept, which makes it easier to restore data.

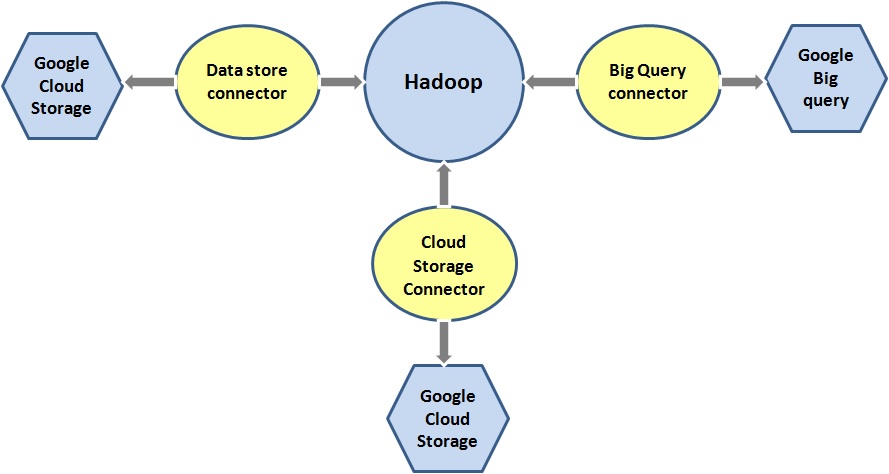

- Big Data Ecosystem Integration: BigQuery integrates with the Apache big data ecosystem, so Hadoop and Spark workloads can read or write data through Cloud Dataflow and Cloud Dataproc.

- Cost Control: BigQuery provides cost control mechanisms that let you cap daily costs.

- Support for AI: BigQuery integrates with TensorFlow and Cloud ML. Combined with BigQuery’s ability to transform and analyze data, this helps you build machine learning models on structured data.

- Data Ingestion: BigQuery can analyze thousands of rows of data per second in real time.

- REST API Interaction: BigQuery offers a REST API that programmers can use from Python, Java, C#, Node.js, Go, PHP, and Ruby.
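The streaming and REST API features above meet in the `tabledata.insertAll` method. The sketch below is illustrative only: it assumes hypothetical event rows and just builds the request body for that method, without sending it. The `insertId` values are generated here with `uuid`, which is one reasonable choice rather than a requirement.

```python
import json
import uuid


def insert_all_body(rows):
    """Wrap plain dict rows in the shape expected by tabledata.insertAll."""
    return {
        "kind": "bigquery#tableDataInsertAllRequest",
        "skipInvalidRows": False,
        "ignoreUnknownValues": False,
        "rows": [
            # insertId lets BigQuery de-duplicate retried rows on a best-effort basis.
            {"insertId": str(uuid.uuid4()), "json": row}
            for row in rows
        ],
    }


# Hypothetical rows; the column names are placeholders.
events = [
    {"user_id": 1, "action": "click"},
    {"user_id": 2, "action": "view"},
]
payload = insert_all_body(events)
print(json.dumps(payload, indent=2))
```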


How Does Google BigQuery Analytics Work with a Big Data Solution?

Because there is no infrastructure to manage in Google BigQuery, you can focus on analyzing data with SQL to find meaningful insights. This is its most beneficial feature for a big data solution. There is also no need for a database administrator. In addition, BigQuery's powerful analysis capabilities help improve the insights drawn from data. Today’s big data requirements call for real-time data analysis, and BigQuery does well in this regard.

Here we highlight some key points that explain how Google BigQuery can help you analyze big data in less time.

Columnar Storage

BigQuery stores data in a columnar format and also offers a complete view of data held in Google Drive, Google Sheets, Google Cloud Storage, and Google Cloud Bigtable. Columnar storage lends itself to a massively parallel design: each query is distributed across multiple servers, so results come back quickly.
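A toy illustration of why a columnar layout suits analytical queries: an aggregate over one column only has to read that column, and the column can be split into chunks that are processed independently. This is a plain-Python sketch with made-up data, not a description of BigQuery's internal storage format.

```python
rows = [
    {"user_id": 1, "country": "US", "amount": 20.0},
    {"user_id": 2, "country": "DE", "amount": 35.5},
    {"user_id": 3, "country": "US", "amount": 12.5},
]


def to_columns(records):
    """Pivot row-oriented records into one list per column."""
    columns = {}
    for record in records:
        for name, value in record.items():
            columns.setdefault(name, []).append(value)
    return columns


def chunked(values, size):
    """Split a column into fixed-size chunks, one per simulated worker."""
    return [values[i:i + size] for i in range(0, len(values), size)]


columns = to_columns(rows)

# SUM(amount) touches only the "amount" column; the other columns are never read.
partial_sums = [sum(chunk) for chunk in chunked(columns["amount"], 2)]
total = sum(partial_sums)
print(partial_sums, total)  # [55.5, 12.5] 68.0
```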

Query Prioritization

In general, there are two types of queries for execution:

- Batch

- Interactive

In Google Cloud, every project is allocated slots, which are the units of computational capacity required to execute SQL queries. Google BigQuery can execute a SQL query as either an interactive or a batch query, and it runs queries as interactive by default. Under Google’s on-demand pricing model, a project can use up to a certain number of slots (a maximum of 2,000). Both the batch and the interactive model support query prioritization.

Batch queries are queued and executed once idle resources become available. An interactive query, by contrast, starts as soon as it is issued and competes for slots with all other concurrently running interactive queries from other on-demand projects.
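In the REST API, the choice between the two modes is a single `priority` field on the query job configuration. The sketch below builds a `jobs.insert` request body without sending it; the table name in the SQL is a hypothetical placeholder, and the optional `maximumBytesBilled` cap is included only to show where a per-query cost limit would go.

```python
import json


def query_job_body(sql, batch=False, max_bytes_billed=None):
    """Build a jobs.insert request body for a standard SQL query."""
    query_config = {
        "query": sql,
        "useLegacySql": False,
        # INTERACTIVE is the default; BATCH waits for idle resources.
        "priority": "BATCH" if batch else "INTERACTIVE",
    }
    if max_bytes_billed is not None:
        # int64 values are sent as strings in the REST API.
        query_config["maximumBytesBilled"] = str(max_bytes_billed)
    return {"configuration": {"query": query_config}}


# Hypothetical table name, for illustration only.
sql = (
    "SELECT country, SUM(amount) AS revenue "
    "FROM `my-project.analytics.sales` GROUP BY country"
)
interactive_job = query_job_body(sql)
batch_job = query_job_body(sql, batch=True, max_bytes_billed=10**9)
print(json.dumps(batch_job, indent=2))
```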

Less Data Loading Time

BigQuery can handle any relational data model efficiently. Moreover, there is no need to change the schema when moving from an existing data warehouse to BigQuery. Most normalized data structures map naturally to nested and repeated rows in BigQuery. This simplifies loading data from JSON and Avro files and thus reduces the overall data analysis time.
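As a rough sketch of that mapping, the snippet below folds two normalized tables (customers and their orders) into one record per customer with a repeated `orders` field, then serializes the result as newline-delimited JSON, a format BigQuery can load. The table and field names are invented for the example.

```python
import json

# Two normalized "tables" with a one-to-many relationship (made-up data).
customers = [
    {"customer_id": 1, "name": "Ada"},
    {"customer_id": 2, "name": "Lin"},
]
orders = [
    {"order_id": 10, "customer_id": 1, "total": 25.0},
    {"order_id": 11, "customer_id": 1, "total": 40.0},
    {"order_id": 12, "customer_id": 2, "total": 15.0},
]


def nest_orders(customers, orders):
    """Attach each customer's orders as a repeated, nested field."""
    by_customer = {}
    for order in orders:
        by_customer.setdefault(order["customer_id"], []).append(
            {"order_id": order["order_id"], "total": order["total"]}
        )
    return [
        {**customer, "orders": by_customer.get(customer["customer_id"], [])}
        for customer in customers
    ]


nested = nest_orders(customers, orders)

# One JSON object per line, ready to be written to a load file.
ndjson = "\n".join(json.dumps(record) for record in nested)
print(ndjson)
```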


Google BigQuery Pricing Model

The Google BigQuery pricing model takes several forms, and its main benefit is that it is flexible and scalable. BigQuery pricing can be categorized by the following criteria:

- Storage Costs

  - Active: Monthly charge for stored data modified within the last 90 days

  - Long-term: Monthly charge for stored data that has not been modified within the last 90 days. This is usually lower than the active rate.

- Query Costs

  - On-demand: Based on the amount of data processed by each query

  - Flat rate: Fixed monthly cost, ideal for enterprise users

- Free Usage

  - First 10 GB of storage per month

  - First 1 TB of query data processed per month

  - Free usage is also available for the following operations:

    - Loading data (network pricing applies to inter-region loads)

    - Copying data

    - Exporting data

    - Deleting datasets

    - Deleting tables, views, and partitions

    - Metadata operations

- Streaming Pricing

  Loading data into BigQuery is free. However, a small charge applies to streamed data, and the amount varies by region.

- Data Manipulation Language (DML) Pricing

  - This is based on the number of bytes processed by the query.

- Data Transfer Service Charges

  This is a monthly prorated charge that applies to the following applications:

  - Google AdWords

  - DoubleClick Campaign Manager

  - DoubleClick for Publishers

  - YouTube Channel and YouTube Content Owner
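The storage, query, and free-usage categories can be combined into a rough monthly estimate. The sketch below uses the $0.02/GB active storage rate and $5/TB on-demand query rate quoted later in this article, plus the free allowances listed above. The long-term storage rate and the order in which the free storage allowance is applied are assumptions made for illustration, so treat the output as a back-of-the-envelope figure and check current pricing before relying on it.

```python
def monthly_cost(
    active_gb,
    long_term_gb,
    queried_tb,
    active_rate=0.02,      # $/GB/month, figure quoted in this article
    long_term_rate=0.01,   # $/GB/month, ASSUMED for illustration
    query_rate=5.0,        # $/TB processed, figure quoted in this article
    free_storage_gb=10.0,  # free tier: first 10 GB of storage
    free_query_tb=1.0,     # free tier: first 1 TB of queries
):
    """Estimate a monthly on-demand bill in dollars."""
    # Assumption: the free storage allowance is applied to active storage first.
    billable_active = max(active_gb - free_storage_gb, 0.0)
    leftover_free = max(free_storage_gb - active_gb, 0.0)
    billable_long_term = max(long_term_gb - leftover_free, 0.0)
    billable_query_tb = max(queried_tb - free_query_tb, 0.0)

    storage = billable_active * active_rate + billable_long_term * long_term_rate
    queries = billable_query_tb * query_rate
    return {
        "storage": round(storage, 2),
        "queries": round(queries, 2),
        "total": round(storage + queries, 2),
    }


estimate = monthly_cost(active_gb=500, long_term_gb=2000, queried_tb=4)
print(estimate)  # {'storage': 29.8, 'queries': 15.0, 'total': 44.8}
```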

How Do Google BigQuery and Redshift Compete with Each Other?

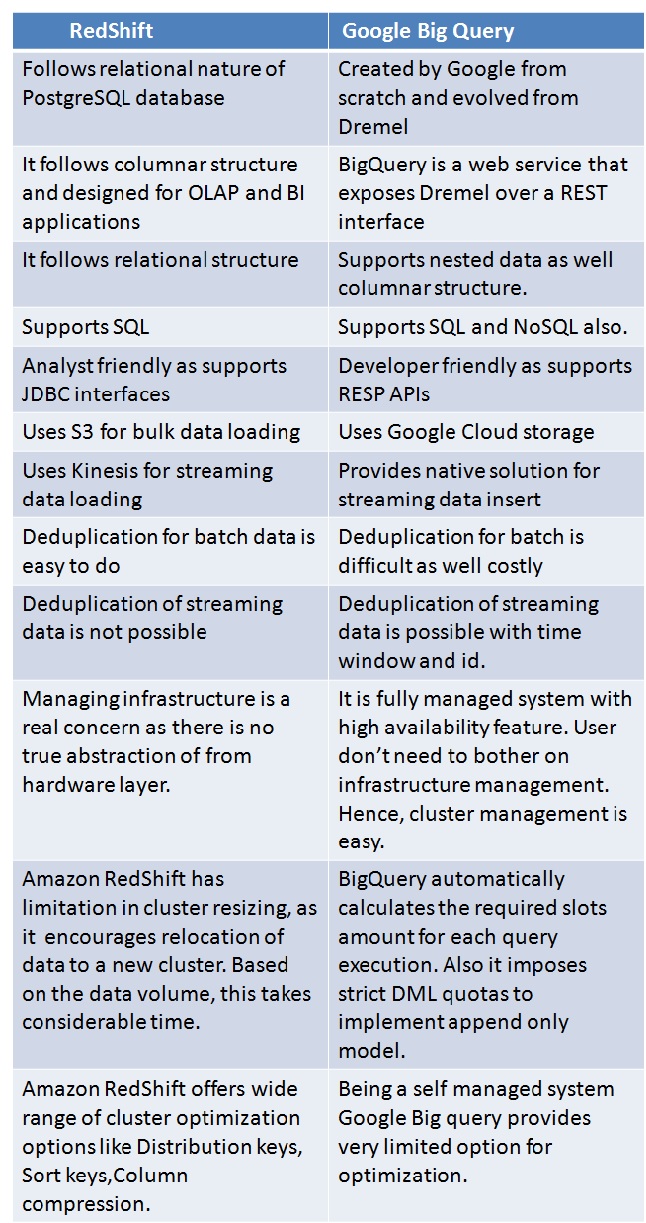

Google BigQuery and Amazon Redshift are both high performers in cloud-based big data analytics. However, they have many operational differences, which make them market competitors. The primary differences between Google BigQuery and Redshift are described below.


Google BigQuery vs Redshift: Costing

At first glance, Redshift appears more expensive than Google BigQuery. Redshift costs $0.08 per GB per month (roughly $1,000/TB/year), whereas BigQuery costs $0.02 per GB per month. However, a closer look at the pricing structure reveals some drawbacks in the BigQuery pricing model.

- The $0.02/GB cost covers only storage, not queries. You pay separately for the data each query processes, at a rate of $5/TB. Cheap storage means little unless you actually use the data.

- The BigQuery pricing model is unpredictable. The cost can vary from month to month, so it is hard to estimate what the bill will be at the end of the month.

- The pricing model is complex in itself. Combining per-query charges with per-GB storage charges requires a thorough analysis.
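To make the trade-off in these points concrete, the sketch below compares the two bills using only the figures quoted above: $0.08/GB/month for Redshift, and $0.02/GB/month plus $5 per TB scanned for BigQuery. It assumes 1 TB = 1,000 GB and ignores the free tier, reserved pricing, and every other discount, so it is a simplified illustration rather than a pricing calculator.

```python
GB_PER_TB = 1000  # decimal TB, an assumption that keeps the arithmetic simple


def redshift_monthly(stored_tb, rate_per_gb=0.08):
    """Monthly Redshift cost using the article's per-GB figure."""
    return stored_tb * GB_PER_TB * rate_per_gb


def bigquery_monthly(stored_tb, scanned_tb, storage_rate_per_gb=0.02, query_rate_per_tb=5.0):
    """Monthly BigQuery cost: storage plus on-demand query charges."""
    storage = stored_tb * GB_PER_TB * storage_rate_per_gb
    return storage + scanned_tb * query_rate_per_tb


def break_even_scanned_tb(stored_tb, query_rate_per_tb=5.0):
    """TB scanned per month at which the two bills are equal."""
    storage_gap = redshift_monthly(stored_tb) - bigquery_monthly(stored_tb, 0)
    return storage_gap / query_rate_per_tb


stored = 1  # TB of data held in the warehouse
print(round(redshift_monthly(stored), 2))        # 80.0 per month, i.e. 960 per year
print(round(bigquery_monthly(stored, 2), 2))     # 30.0 when scanning 2 TB per month
print(round(break_even_scanned_tb(stored), 2))   # 12.0
```

Under these assumptions, BigQuery stays cheaper for 1 TB of stored data until queries scan about 12 TB per month, which is why the comparison depends so heavily on how often the data is queried.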

Conclusion

If you want to keep up with a new technology, you first need to grasp the basics. BigQuery blends cloud computing with a big data solution, so knowing both technologies is an added advantage for anyone.

At Whizlabs, we share knowledge with professionals at every level. Our training courses on cloud technology and big data cover preparation resources for most of the recognized vendor-specific certifications. For example, in the cloud computing stream you can select certifications from AWS, Azure, Google, and Salesforce. We are also going to launch Google Cloud (GCP) certification guides very soon for courses such as Google Certified Professional Cloud Architect, Google Cloud Professional Data Engineer, and Google Cloud Security Certification. In the big data stream, you can select Cloudera or Hortonworks certification training.

Whizlabs promises to provide you the best in the lot. Join us and experience the difference!
