Google Cloud Dataflow is a managed service for executing a wide range of data processing patterns. It lets you set up pipelines, monitor their execution, and transform and analyze data within the cloud. Used well, it helps you draw actionable insights from your data while lowering operational costs.


Dataflow handles the work of deploying, maintaining, and scaling the pipeline infrastructure, so you can focus on core business concerns. It overlaps with several competing frameworks and services, including Amazon Kinesis, Apache Spark, Apache Storm, and Facebook Flux. Google first previewed the service in June 2014 at the Google I/O developer conference.


There is more to know about this seven-year-old managed service, which has helped organizations work more efficiently. This article covers the core attributes of Google Cloud Dataflow so that you can understand what it does and how it is used.

Overview and Working of Google Cloud Dataflow

The cloud computing market has boomed in recent years, and many businesses now see migrating to the cloud as essential. If you are not on the cloud, you cannot adopt cloud-based solutions such as Google Cloud Dataflow. With a well-implemented cloud architecture, you can run business operations more seamlessly.


Google Cloud Dataflow is a popular managed service designed by Google to help companies assess, enrich, and analyze their data, either in stream (real-time) mode or in batch (historical) mode. It offers a reliable way to discover high-quality, business-critical information in that data.

It takes a serverless approach to provisioning and managing resources. Organizations can therefore tackle data processing challenges with virtually unlimited capacity and stay agile while doing so. Many developers and enterprises use Dataflow as an ETL tool within GCP, that is, a tool to extract, transform, and load information.

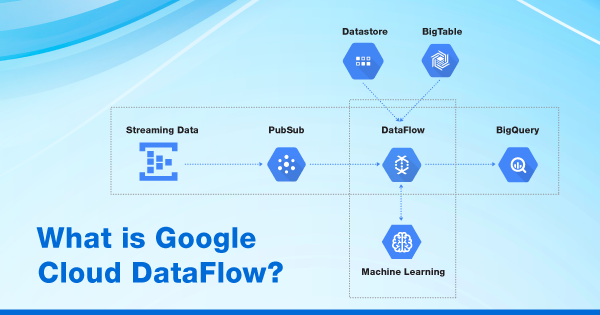

You can think of it as a next-generation ETL tool: it extracts data from the databases of the source systems and then transforms it without imposing limits on the transformations. You can build pipeline jobs that move information among services such as BigQuery, Bigtable, Cloud Datastore, and Pub/Sub. With these, you can build your own data warehouse on Google Cloud Platform.


Google Cloud Dataflow works through abstractions that decouple the application code from the implementation details of the runtime environment and the storage systems. This lets it break down both stored data sets and real-time information in a convenient manner.

Dataflow runs on a fully managed, serverless model. The idea is to give developers more freedom to concentrate on their ideas and write innovative code: while the developers take care of the code, Dataflow provisions and manages the computing resources it needs.

Data scientists can work at a higher level of abstraction, which lets them work more efficiently and productively. The Dataflow model is also available as open source through Apache Beam. It comes with a collection of APIs and SDKs that let developers design and implement batch or streaming pipelines for data processing. The Cloud Dataflow service generates an execution graph from the pipeline, which makes it straightforward to run pipeline steps in parallel.

On top of this, Dataflow offers horizontal autoscaling of worker resources to maximize resource utilization. The service also optimizes processing by fusing multiple tasks into a single execution pass where possible. In addition, you can add SQL queries through its integration with the cloud-based analytics service BigQuery.
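The idea of reducing multiple tasks into a single execution pass can be illustrated with a minimal plain-Python sketch. This is not Dataflow's optimizer; the helper names `fuse`, `run_unfused`, and `run_fused` are hypothetical and only show why one fused pass avoids building intermediate collections.

```python
def fuse(*steps):
    """Combine per-element functions into one function (hypothetical helper)."""
    def fused(element):
        for step in steps:
            element = step(element)
        return element
    return fused


def run_unfused(data, steps):
    # One full pass over the data, and one intermediate list, per step.
    for step in steps:
        data = [step(x) for x in data]
    return data


def run_fused(data, steps):
    # A single pass: every element flows through all steps before the next one.
    fused = fuse(*steps)
    return [fused(x) for x in data]


steps = [str.strip, str.lower, len]
records = ["  Alpha ", "BETA", " gamma"]
print(run_unfused(records, steps))
print(run_fused(records, steps))
```

Both functions produce the same result; the fused version simply touches each element once instead of once per step.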

If you already use Google BigQuery, Dataflow can clean, prepare, and filter the data before writing it to BigQuery. Moreover, Dataflow can read data from BigQuery in case you want to join it with other sources.
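As a rough illustration of the clean, prepare, and filter steps that run before data is loaded, here is a small standard-library sketch. It does not use the Dataflow or BigQuery APIs: the sample CSV, the field names, and the in-memory `warehouse_table` list standing in for a BigQuery table are all assumptions made for the example.

```python
import csv
import io

# Hypothetical raw input; in a real pipeline this would come from a source system.
RAW_CSV = """user_id,country,amount
1, us ,10.50
2,DE,
3,fr,7.25
"""


def extract(text):
    """Extract: parse CSV text into a list of dict rows."""
    return list(csv.DictReader(io.StringIO(text)))


def clean(row):
    """Transform: normalize types and formatting of one row."""
    amount = row["amount"].strip()
    return {
        "user_id": int(row["user_id"]),
        "country": row["country"].strip().upper(),
        "amount": float(amount) if amount else None,
    }


def is_valid(row):
    """Filter: keep only rows that have an amount."""
    return row["amount"] is not None


def load(rows, sink):
    """Load: append rows to the sink (a list standing in for a warehouse table)."""
    sink.extend(rows)
    return len(rows)


warehouse_table = []
cleaned = [clean(r) for r in extract(RAW_CSV)]
loaded = load([r for r in cleaned if is_valid(r)], warehouse_table)
print(loaded, warehouse_table)
```

The row with the missing amount is dropped before loading, which mirrors the filtering step described above.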

Google Cloud Dataflow is highly versatile: ETL, real-time streaming, and batch processing are among its capabilities. It addresses the performance problems that MapReduce had when building dedicated pipelines. Google created MapReduce, which later became a core component of Hadoop. When MapReduce's performance declined on multi-petabyte datasets, Google replaced it with Dataflow for building pipelines in order to handle datasets of that scale better.

Google Cloud DataFlow Pricing

Google Cloud Dataflow prices are quoted per hour, but usage is billed per job in per-second increments. Usage is stated in hours so that the hourly rates can be applied to per-second use: 30 minutes of usage, for example, appears on the bill as 0.5 hours.

Jobs and workers consume resources in different ways. Dataflow workers consume vCPU, memory, storage, and GPU resources, each of which is billed on a per-second basis. Batch and streaming workers are specialized resources that use Compute Engine. However, a Dataflow job does not generate separate Compute Engine billing for the Compute Engine resources that the Dataflow service manages.


The price of using Google Cloud Dataflow depends on the worker type, vCPU, memory, and the data processed. The rates are as follows:

| DataFlow Worker Type | vCPU (per hour) | Data Processed (per GB) | Memory (per GB per Hour) |
| --- | --- | --- | --- |
| Batch | $0.056 | $0.011 | $0.003557 |
| FlexRS | $0.0336 | $0.011 | $0.0021342 |
| Streaming | $0.069 | $0.018 | $0.003557 |

For pricing details of other resources, see Google's official Dataflow pricing documentation.
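To show how the hourly rates apply to per-second usage, here is a small estimate calculator that uses the rates from the table above. It is a simplified sketch: the function names are hypothetical, it ignores storage and GPU charges, and actual rates vary, so treat the output as an illustration rather than a quote.

```python
# Rates copied from the pricing table in this article (USD).
RATES = {
    "batch": {"vcpu_hour": 0.056, "gb_processed": 0.011, "mem_gb_hour": 0.003557},
    "flexrs": {"vcpu_hour": 0.0336, "gb_processed": 0.011, "mem_gb_hour": 0.0021342},
    "streaming": {"vcpu_hour": 0.069, "gb_processed": 0.018, "mem_gb_hour": 0.003557},
}


def seconds_to_billed_hours(seconds):
    """Per-second usage expressed in hours, e.g. 1800 s is 0.5 h."""
    return seconds / 3600


def estimate_cost(worker_type, seconds, vcpus, memory_gb, data_processed_gb):
    """Estimate job cost from vCPU, memory, and data processed only (assumption)."""
    rates = RATES[worker_type]
    hours = seconds_to_billed_hours(seconds)
    vcpu_cost = hours * vcpus * rates["vcpu_hour"]
    memory_cost = hours * memory_gb * rates["mem_gb_hour"]
    data_cost = data_processed_gb * rates["gb_processed"]
    return vcpu_cost + memory_cost + data_cost


# A hypothetical 30-minute batch job: 4 vCPUs, 15 GB memory, 100 GB processed.
print(seconds_to_billed_hours(1800))
print(round(estimate_cost("batch", 1800, 4, 15, 100), 4))
```

Running the same job as FlexRS only requires changing the worker type, which makes it easy to compare the two batch options.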

Use Cases of Google Cloud DataFlow

Google Cloud Dataflow is one more member of Google Cloud's family of managed, serverless services. It is designed to help you run your enterprise conveniently as part of a digital transformation. Dataflow also works with third-party developers and partners to make data processing tasks seamless; for example, it integrates with Cloudera, ClearStory, Salesforce, and others. The following use cases give a clearer picture of how it is applied.

1. Stream Analytics

Google's stream analytics helps you organize data more effectively and makes it accessible and useful from the moment it is generated. The streaming solution is built on Cloud Dataflow together with BigQuery and Pub/Sub. It provisions the resources needed to ingest, process, and analyze fluctuating volumes of data for real-time insights.

This abstracted provisioning reduces complexity and makes stream analytics accessible to data analysts as well as data engineers.
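A common building block of stream analytics is grouping events into fixed time windows and aggregating per window. The sketch below shows the idea in plain Python; it is not the Dataflow or Apache Beam API, and the function name and the event format (timestamp in seconds, event type) are assumptions made for illustration.

```python
from collections import defaultdict


def fixed_window_counts(events, window_seconds):
    """Count events per (window start, event type) using fixed-size windows."""
    counts = defaultdict(int)
    for timestamp, event_type in events:
        # Align each timestamp to the start of the window that contains it.
        window_start = (timestamp // window_seconds) * window_seconds
        counts[(window_start, event_type)] += 1
    return dict(counts)


# Hypothetical event stream: (timestamp in seconds, event type).
events = [(0, "click"), (30, "click"), (61, "view"), (119, "click"), (120, "click")]
for key, count in sorted(fixed_window_counts(events, 60).items()):
    print(key, count)
```

A managed streaming service additionally has to deal with late and out-of-order events, which this sketch deliberately leaves out.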

2. Real-Time Artificial Intelligence

Google Cloud Dataflow feeds streaming events to TFX and Vertex AI on Google Cloud. This enables predictive analytics, real-time personalization, and fraud detection. Further real-time AI use cases that Dataflow can help implement include anomaly detection, pattern recognition, and predictive forecasting.

TFX uses Apache Beam and Dataflow together as a distributed data processing engine for several stages of the ML lifecycle. These stages are supported through Kubeflow Pipelines, with CI/CD for ML integrated.
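To make the anomaly detection use case more concrete, here is a minimal streaming detector based on a running mean and standard deviation (Welford's method). It is a generic statistical sketch, not how TFX or Vertex AI detect anomalies; the class name, the threshold of three standard deviations, and the warm-up length are all assumptions.

```python
import math


class RunningAnomalyDetector:
    """Flags values that lie far from the running mean of the values seen so far."""

    def __init__(self, threshold=3.0, warmup=5):
        self.threshold = threshold  # z-score above which a value is flagged
        self.warmup = warmup        # number of values to observe before flagging
        self.count = 0
        self.mean = 0.0
        self.m2 = 0.0               # running sum of squared deviations

    def observe(self, value):
        is_anomaly = False
        if self.count >= self.warmup:
            std = math.sqrt(self.m2 / (self.count - 1))
            if std > 0 and abs(value - self.mean) / std > self.threshold:
                is_anomaly = True
        if not is_anomaly:
            # Welford update; anomalies are excluded so they do not skew the baseline.
            self.count += 1
            delta = value - self.mean
            self.mean += delta / self.count
            self.m2 += delta * (value - self.mean)
        return is_anomaly


detector = RunningAnomalyDetector()
readings = [10, 11, 9, 10, 12, 10, 95, 11]
print([detector.observe(r) for r in readings])
```

Only the reading of 95 is flagged in this sample; each value is processed as it arrives, which is what makes the approach suitable for streaming input.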


3. Log & Sensor Data Processing

With Google Cloud Dataflow, you can unlock business insights from a global device network with an intelligent IoT platform. Its managed integration and scalability help you connect, store, and analyze data at the edge and in Google Cloud. To know more about IoT and its implementation with Dataflow, refer to this documentation by Google.

Salient Features of Google Cloud Dataflow

Google Cloud Dataflow lets you create data pipelines that can be monitored and used to transform and analyze data. It is a feature-rich tool that is serverless, fast, and effective, which makes it well suited to data management and processing. Here are its key features.

- Auto-scaling infrastructure and dynamic work rebalancing

Google Cloud Dataflow scales resources automatically. Autoscaling minimizes pipeline latency while maximizing resource utilization. Because scaling is driven by the data being processed, it also reduces the cost per data record.

Data inputs are partitioned automatically and continuously rebalanced to even out worker resource utilization. This dynamic work rebalancing reduces the impact of hot keys on pipeline performance and so helps pipelines run faster.
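The effect of rebalancing work across workers can be sketched with a simple greedy scheduler that always hands the next-largest work item to the least-loaded worker. This is an illustrative heuristic only, not Dataflow's actual rebalancing algorithm; the function name and the cost estimates are hypothetical.

```python
import heapq


def rebalance(work_items, num_workers):
    """Assign items (name -> estimated cost) to workers, largest item first.

    Returns a sorted list of (total load, item names), one entry per worker.
    """
    # Heap entries are (current load, worker index, assigned item names).
    heap = [(0, index, []) for index in range(num_workers)]
    heapq.heapify(heap)
    by_cost = sorted(work_items.items(), key=lambda kv: kv[1], reverse=True)
    for name, cost in by_cost:
        load, index, names = heapq.heappop(heap)  # least-loaded worker
        names.append(name)
        heapq.heappush(heap, (load + cost, index, names))
    return sorted((load, names) for load, _, names in heap)


# One expensive "hot" partition and several small ones (hypothetical costs).
partitions = {"hot": 9, "a": 3, "b": 3, "c": 2, "d": 1}
for load, names in rebalance(partitions, 2):
    print(load, names)
```

In this sample the hot partition ends up alone on one worker while the small partitions share the other, so both workers carry the same total load.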

- Flexible Scheduling and Pricing

Some jobs, such as those run overnight, can tolerate flexible scheduling. For these, Dataflow offers flexible resource scheduling (FlexRS), which lowers the price of batch processing. Flexible jobs are placed in a queue with a guarantee that they will be retrieved for execution within six hours.

- Real-time AI Patterns

Google Cloud Dataflow provides ready-to-use patterns for real-time AI. These capabilities let a system react to large-scale events in a data flow with near-human intelligence. Users can apply them to build predictive analytics solutions for a range of industrial systems.

The same capabilities support other advanced analytics, such as anomaly detection, and real-time personalization of systems and services. As AI moves to the forefront of industrial and general applications, Dataflow gives users room to experiment with new AI applications.

- Management, Monitoring, and Identification of Data Pipeline Problems

Google Cloud Dataflow offers SLO-based data pipeline management. Service level objectives (SLOs) help detect performance and availability problems in a pipeline. Dataflow's visualization capabilities help you inspect the job graph and identify performance bottlenecks, which you can then work to resolve. The service also offers smart recommendations for fine-tuning identified problems so that overall performance improves.

Taken together, Google Cloud Dataflow is a multifunctional, big data and AI-enabled service that delivers actionable insights at low cost on auto-scaled infrastructure.
