The purpose of cloud load balancing is to distribute traffic across the instances of your organization's applications. When the load is spread evenly, the risk of performance problems drops. Cloud Load Balancing also lets you serve content to users faster, and Google Cloud Load Balancing can scale to handle more than 1 million queries per second. It is a fully distributed, software-defined managed service, so there is no hardware involved in its implementation!

You do not have to manage any physical load-balancing infrastructure: everything runs in the cloud and is easily accessible! As the IT industry grows, cloud computing is becoming an integral part of how organizations operate, and large amounts of data are generated and exchanged across many networks. Google Cloud therefore offers load balancing as a crucial part of its cloud platform, helping keep the workload placed on your applications balanced.


There is more to Cloud Load Balancing that you need to understand to help your organization thrive. This guide walks you through what Cloud Load Balancing is and how to implement it.

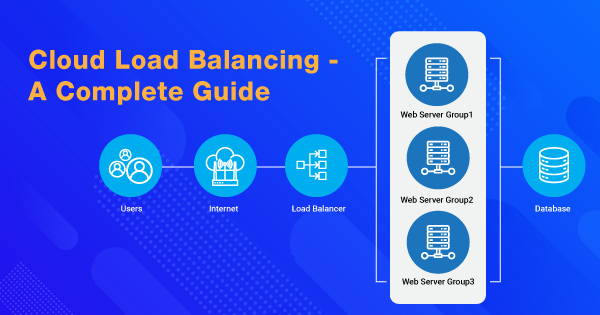

Working Overview of Cloud Load Balancing

Cloud Load Balancing does not require any pre-warming. When you adopt it, you can place load-balanced resources in a single region or in multiple regions, as close to your users as possible. This helps you meet high-availability requirements.

Cloud Load Balancing can place your resources behind a single anycast IP address and scale them up or down with intelligent autoscaling. It comes in several flavors and is integrated with Cloud CDN for optimal application and content delivery. To learn more about Cloud CDN, refer to the Cloud CDN documentation.

Anycast is a routing and addressing methodology in which datagrams from a single sender are routed to the topologically nearest node in a group of potential receivers. All of the potential receivers are identified by the same destination IP address. Google announces its load balancer IP addresses through the Border Gateway Protocol (BGP) from many points in its network.
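To make the idea concrete, here is a minimal Python sketch of how a client's traffic ends up at the nearest point of presence. It assumes several hypothetical points of presence announce the same anycast address (203.0.113.10, a documentation-only address), and it reduces BGP route selection to a single numeric path cost; real BGP best-path selection weighs many more attributes.

```python
from dataclasses import dataclass

# Hypothetical anycast address from the 203.0.113.0/24 documentation range.
ANYCAST_IP = "203.0.113.10"


@dataclass(frozen=True)
class Announcement:
    pop: str        # hypothetical point-of-presence name
    prefix_ip: str  # address announced via BGP
    path_cost: int  # simplified stand-in for BGP best-path selection


def pick_pop(announcements: list[Announcement], dest_ip: str) -> str:
    """Return the PoP a client would reach: the lowest-cost route to dest_ip."""
    candidates = [a for a in announcements if a.prefix_ip == dest_ip]
    if not candidates:
        raise LookupError(f"no route to {dest_ip}")
    # Ties are broken by PoP name so the result is deterministic.
    return min(candidates, key=lambda a: (a.path_cost, a.pop)).pop


# Path costs as seen from a hypothetical client in Western Europe.
routes_from_europe = [
    Announcement("pop-frankfurt", ANYCAST_IP, 2),
    Announcement("pop-iowa", ANYCAST_IP, 5),
    Announcement("pop-tokyo", ANYCAST_IP, 7),
]
print(pick_pop(routes_from_europe, ANYCAST_IP))  # pop-frankfurt
```

Every PoP advertises the same address, so the client never has to know which one it reached; the routing system alone decides.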

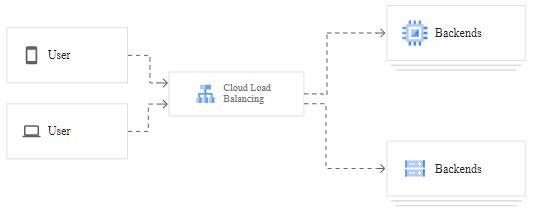

With Cloud Load Balancing, a single anycast IP address can front all of your backend instances in regions around the world. It also provides cross-region load balancing, including automatic multi-region failover: if backends are not ready or become unhealthy, traffic is moved away from them gently, in small fractions.
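The failover behavior can be approximated with a short Python sketch. It assumes you know the round-trip time from a client to each region and whether each region currently has healthy backends; the region names and numbers are illustrative. It simply sends traffic to the nearest healthy region and ignores the gradual, fractional shifting that the real service performs.

```python
def choose_region(rtt_ms: dict[str, float], healthy: dict[str, bool]) -> str:
    """Return the lowest-latency region that currently has healthy backends."""
    for region in sorted(rtt_ms, key=rtt_ms.get):
        if healthy.get(region, False):  # unknown health is treated as unhealthy
            return region
    raise RuntimeError("no healthy region available")


rtt = {"us-east1": 20.0, "europe-west1": 90.0, "asia-east1": 180.0}
print(choose_region(rtt, {"us-east1": True, "europe-west1": True, "asia-east1": True}))   # us-east1
print(choose_region(rtt, {"us-east1": False, "europe-west1": True, "asia-east1": True}))  # europe-west1
```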

Unlike DNS-based global load balancing, Cloud Load Balancing reacts instantly to changes in traffic, backend health, network conditions, users, and similar factors. Cloud Load Balancing is not an instance- or device-based solution.

This means you are not locked into physical load-balancing infrastructure. Google's load balancing applies to all major traffic types, including TCP/SSL, HTTP(S), and UDP. Cloud Load Balancing can also terminate SSL traffic by using SSL Proxy and HTTPS load balancing.

Because the service is built on Google's front-end serving infrastructure, the same platform that serves Google's own products, it delivers fast response times, high performance, and low latency. Traffic to your application enters Google's network at over 80 distinct load balancing locations worldwide, which maximizes the distance it travels over Google's fast private network.


The autoscaling feature of Cloud Load Balancing scales up as the traffic and users of your application grow, and scales down as they shrink. This gives you good control over unexpected, sudden, or very large traffic spikes: Cloud Load Balancing can direct overflow traffic to other regions around the world that have capacity available at the moment.
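The overflow idea can be sketched as a fill-nearest-first allocation. The following Python example is a simplified model, not Google's algorithm: it assumes each region's spare capacity is known in requests per second, fills the nearest region first, and spills the remainder to the next-nearest region. Region names and capacities are made up for illustration, and the `"unserved"` key is a hypothetical marker for demand that no region could absorb.

```python
def spill_over(demand_rps: int, regions: list[tuple[str, int]]) -> dict[str, int]:
    """Allocate demand to regions ordered nearest-first, given spare capacity."""
    allocation: dict[str, int] = {}
    remaining = demand_rps
    for name, spare_capacity in regions:
        if remaining <= 0:
            break
        take = min(remaining, spare_capacity)
        if take > 0:
            allocation[name] = take
            remaining -= take
    if remaining > 0:
        allocation["unserved"] = remaining  # hypothetical marker, not a region
    return allocation


nearest_first = [("us-central1", 1000), ("us-east1", 400), ("europe-west1", 800)]
print(spill_over(1500, nearest_first))
# {'us-central1': 1000, 'us-east1': 400, 'europe-west1': 100}
```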

Pricing of Google Cloud Load Balancing

Google uses a standard pricing structure for all Cloud Load Balancing types except Internal HTTP(S) Load Balancing. Under Google's load balancing and forwarding rule pricing, you pay:

- $0.025 per hour in total for up to 5 forwarding rules.

- $0.010 per hour for each forwarding rule beyond the first 5.

- $0.008 per GB of ingress data processed by the load balancer.
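As a rough cross-check on the pricing calculator, the rates above can be turned into a monthly estimate. This Python sketch hard-codes the rates quoted in this article (actual rates can differ by region and change over time) and assumes a 730-hour month. It covers forwarding rules and processed data only, not other charges such as network egress.

```python
HOURS_PER_MONTH = 730  # assumption: average number of hours in a month

# Rates as quoted in this article (USD); check current regional pricing.
RULES_INCLUDED = 5        # forwarding rules covered by the base rate
BASE_RULES_RATE = 0.025   # per hour, covers up to 5 forwarding rules
EXTRA_RULE_RATE = 0.010   # per hour, for each rule beyond the first 5
DATA_RATE_PER_GB = 0.008  # per GB of ingress data processed


def monthly_lb_cost(forwarding_rules: int, processed_gb: float,
                    hours: float = HOURS_PER_MONTH) -> float:
    """Estimate monthly forwarding-rule and data-processing charges in USD."""
    if forwarding_rules < 0 or processed_gb < 0:
        raise ValueError("inputs must be non-negative")
    hourly = 0.0
    if forwarding_rules > 0:
        extra_rules = max(0, forwarding_rules - RULES_INCLUDED)
        hourly = BASE_RULES_RATE + EXTRA_RULE_RATE * extra_rules
    return round(hourly * hours + DATA_RATE_PER_GB * processed_gb, 2)


print(monthly_lb_cost(3, 0))     # 18.25
print(monthly_lb_cost(8, 1000))  # 48.15
```

For example, 8 forwarding rules and 1,000 GB of processed data come to (0.025 + 3 × 0.010) × 730 + 0.008 × 1,000 = 40.15 + 8.00 = $48.15 per month.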

To estimate your load balancing charges, use the Google Cloud pricing calculator:

- Go to the pricing calculator.

- Select the Cloud Load Balancing tab.

- Choose a region from the drop-down menu.

- Enter the estimated number of forwarding rules.

- Enter your estimated monthly network traffic.

- The calculator then shows the estimated cost for each month!

For Internal HTTP(S) Load Balancing, you pay $0.025 per hour for each proxy instance, plus $0.008 per GB of data processed by the load balancer. For details, see the Google Cloud load balancing pricing page.
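Because the number of proxy instances can change as traffic changes, this charge is easiest to reason about in proxy-instance hours. A minimal Python sketch using the two rates quoted above (which may differ by region and over time):

```python
PROXY_RATE_PER_HOUR = 0.025  # per proxy instance, as quoted in this article
DATA_RATE_PER_GB = 0.008     # per GB processed, as quoted in this article


def internal_https_lb_cost(proxy_instance_hours: float, processed_gb: float) -> float:
    """Estimate Internal HTTP(S) Load Balancing charges in USD."""
    return round(PROXY_RATE_PER_HOUR * proxy_instance_hours
                 + DATA_RATE_PER_GB * processed_gb, 2)


# Hypothetical month: 3 proxies running 730 hours each, 500 GB processed.
print(internal_https_lb_cost(3 * 730, 500))  # 58.75
```

Here 2,190 proxy-instance hours cost 2,190 × $0.025 = $54.75, and 500 GB adds $4.00, for $58.75 in total.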

Types of Cloud Load Balancers

To choose a load balancer, first decide what kind of load balancing you need: global or regional, and external or internal. Then consider the type of traffic you intend to serve, such as HTTP, HTTPS, TCP, UDP, or SSL.

Global load balancing is for backend endpoints in multiple regions, whereas regional load balancing is for backend endpoints in a single region. Use global load balancing when users need access to the same applications and content from anywhere, served through a single anycast IP address. Global load balancing also offers IPv6 termination, whereas regional load balancing supports only IPv4 termination.

With several load balancing options available, you need to pick the right one for your cloud architecture. To distribute traffic coming from the internet into your Google Cloud network, use an external load balancer. To distribute traffic within your Google Cloud Platform (GCP) network, use an internal load balancer.

1. External Load Balancing/Balancers

Global external load balancing requires the Premium Tier of Network Service Tiers. Regional external load balancing can use the Standard Tier. There are four external load balancer options:

- HTTP(S) load balancing for HTTP and HTTPS traffic.

- TCP proxy for TCP traffic on ports other than 80 and 8080, without SSL offload.

- SSL proxy for SSL offload on ports other than 80 and 8080.

- Network load balancing for TCP/UDP traffic.
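The list above can be read as a small decision procedure. The Python sketch below is a simplified, unofficial reading of it: the function and parameter names are hypothetical, and sending non-HTTP TCP traffic on ports 80 and 8080 to network load balancing is an assumption, since the list only says the proxy options exclude those ports. It also rejects a global request for network load balancing, because network load balancing is regional.

```python
PROXY_EXCLUDED_PORTS = {80, 8080}


def choose_external_lb(protocol: str, port: int, ssl_offload: bool,
                       global_scope: bool) -> tuple[str, str]:
    """Return (load balancer option, Network Service Tier) for external traffic."""
    proto = protocol.upper()
    if proto in ("HTTP", "HTTPS"):
        option = "HTTP(S) Load Balancing"
    elif proto == "UDP":
        option = "Network Load Balancing"
    elif proto == "TCP":
        if port in PROXY_EXCLUDED_PORTS:
            # Assumption: non-HTTP TCP on 80/8080 falls back to network LB.
            option = "Network Load Balancing"
        elif ssl_offload:
            option = "SSL Proxy Load Balancing"
        else:
            option = "TCP Proxy Load Balancing"
    else:
        raise ValueError(f"unsupported protocol: {protocol}")

    if global_scope and option == "Network Load Balancing":
        raise ValueError("network load balancing is regional")
    # Global external load balancing needs Premium Tier; regional can use Standard.
    tier = "Premium" if global_scope else "Standard or Premium"
    return option, tier


print(choose_external_lb("https", 443, ssl_offload=False, global_scope=True))
# ('HTTP(S) Load Balancing', 'Premium')
print(choose_external_lb("tcp", 5432, ssl_offload=False, global_scope=False))
# ('TCP Proxy Load Balancing', 'Standard or Premium')
```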

2. Internal Load Balancing/Balancers

Like network load balancing and HTTP(S) load balancing, internal load balancing is not a hardware device, appliance, or instance. It can support as many connections per second as you need.

Choosing by traffic type narrows down the options:

- For HTTP(S) traffic, use Internal or External HTTP(S) load balancing.

- For TCP traffic, use TCP proxy load balancing, Internal TCP/UDP load balancing, or network load balancing.

- For UDP traffic, use Internal TCP/UDP load balancing or network load balancing.

- For ESP/ICMP traffic, use network load balancing.
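Combining this list with the external/internal choice described earlier gives a simple lookup. The Python sketch below encodes the list as data (the dictionary layout and function name are illustrative, not a Google Cloud API) and filters it by whether the load balancer must be internal or external.

```python
# (option, scope) pairs per traffic type, taken from the list above.
OPTIONS_BY_TRAFFIC = {
    "HTTP(S)": [("Internal HTTP(S) Load Balancing", "internal"),
                ("External HTTP(S) Load Balancing", "external")],
    "TCP": [("TCP Proxy Load Balancing", "external"),
            ("Internal TCP/UDP Load Balancing", "internal"),
            ("Network Load Balancing", "external")],
    "UDP": [("Internal TCP/UDP Load Balancing", "internal"),
            ("Network Load Balancing", "external")],
    "ESP/ICMP": [("Network Load Balancing", "external")],
}


def candidate_lbs(traffic: str, internal: bool) -> list[str]:
    """List the load balancer options for a traffic type and scope."""
    scope = "internal" if internal else "external"
    return [name for name, s in OPTIONS_BY_TRAFFIC.get(traffic, []) if s == scope]


print(candidate_lbs("TCP", internal=False))
# ['TCP Proxy Load Balancing', 'Network Load Balancing']
print(candidate_lbs("UDP", internal=True))
# ['Internal TCP/UDP Load Balancing']
```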

| Load balancer type | Backend region and network | Multi-NIC notes |
| --- | --- | --- |
| Internal TCP/UDP Load Balancing | All backends must be in the same VPC network and the same region as the backend service. The backend service must also be in the same region and VPC network as the forwarding rule. | By using multiple load balancers, you can load balance to multiple NICs on the same backend. |
| Internal HTTP(S) Load Balancing | All backends must be in the same VPC network and the same region as the backend service. The backend service must also be in the same region and VPC network as the forwarding rule. | The backend VM’s nic0 must be in the same network and region used by the forwarding rule. |
| HTTP(S) Load Balancing, SSL Proxy Load Balancing, TCP Proxy Load Balancing | In Premium Tier: backends can be in any region and any VPC network. In Standard Tier: backends must be in the same region as the forwarding rule, but can be in any VPC network. | The load balancer only sends traffic to the first network interface (nic0), whichever VPC network that nic0 is in. |

What is Internal HTTP(S) Load Balancing?

Google Cloud Internal HTTP(S) Load Balancing is a proxy-based, regional Layer 7 load balancer that lets you run and scale your services behind an internal IP address. It distributes HTTP and HTTPS traffic to backends hosted on Compute Engine and Google Kubernetes Engine (GKE). The load balancer is accessible only in the chosen region of your VPC network, on an internal IP address.

Internal HTTP(S) Load Balancing is a managed service based on the open-source Envoy proxy, which enables rich traffic control based on HTTP(S) parameters. After you configure the load balancer, Envoy proxies are allocated automatically to meet your traffic needs.

An internal HTTP(S) load balancer consists of an internal IP address to which clients send traffic, and one or more backend services to which the load balancer forwards that traffic. Internal HTTP(S) Load Balancing has certain limitations, which are listed in the Google Cloud documentation.

What is External HTTP(S) Load Balancing?

External HTTP(S) Load Balancing is implemented on Google Front Ends (GFEs). GFEs are distributed globally and operate together by using Google's global network and control plane. This option supports multi-region load balancing, but only in the Premium Tier.

With multi-region load balancing, traffic is directed to the closest healthy backend that has the capacity to handle it, and HTTP(S) traffic is terminated as close as possible to your users. In the Standard Tier, load balancing is regional: everything stays within one region!

The load balancer's external forwarding rule specifies an external IP address, a port, and a global target HTTP(S) proxy. Clients use that IP address and port to connect to the load balancer. The target HTTP(S) proxy receives each client request and evaluates it against a URL map to decide how to route the traffic. For HTTPS, the proxy can also authenticate communications by using SSL certificates.
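URL-map routing can be illustrated with a simplified model. In this Python sketch, which is not the actual URL map API, each host maps to a list of path prefixes and backend service names, the most specific (longest) matching prefix wins, and anything unmatched goes to a single default service. Real URL maps also support per-path-matcher default services, exact-path rules, and more advanced route rules, which are omitted here; all host, path, and service names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SimpleUrlMap:
    """Simplified stand-in for a URL map (hypothetical, not the Compute API)."""
    default_service: str
    # host -> list of (path prefix, backend service name)
    host_rules: dict[str, list[tuple[str, str]]] = field(default_factory=dict)

    def route(self, host: str, path: str) -> str:
        rules = self.host_rules.get(host.lower(), [])
        matches = [(prefix, svc) for prefix, svc in rules if path.startswith(prefix)]
        if not matches:
            return self.default_service
        # The most specific (longest) matching prefix wins.
        return max(matches, key=lambda m: len(m[0]))[1]


url_map = SimpleUrlMap(
    default_service="web-backend",
    host_rules={"example.com": [("/video/", "video-backend"),
                                ("/video/live/", "live-backend")]},
)
print(url_map.route("example.com", "/video/live/stream1"))  # live-backend
print(url_map.route("example.com", "/video/clip.mp4"))      # video-backend
print(url_map.route("shop.example.com", "/video/x"))        # web-backend
```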

Bottom Line

Cloud Load Balancing has many more capabilities than this guide can cover, and you will understand its benefits best by applying it to your own applications. It supports Cloud Logging, which logs the requests sent to your load balancers; you can use these logs to analyze your traffic.
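As an example of that kind of analysis, the Python sketch below summarizes request logs. It assumes the entries were exported from Cloud Logging as one JSON object per line and uses only the `httpRequest.status` and `httpRequest.latency` fields of the log entry format (latency appears in JSON as a duration string such as `"0.100s"`). The function name and the three sample entries are made up for illustration.

```python
import json
from collections import Counter


def summarize_lb_logs(entries_jsonl: str) -> dict:
    """Count requests, compute the 5xx error rate and average latency."""
    statuses: Counter = Counter()
    latencies: list[float] = []
    for line in entries_jsonl.splitlines():
        if not line.strip():
            continue
        req = json.loads(line).get("httpRequest", {})
        if "status" in req:
            statuses[req["status"]] += 1
        latency = req.get("latency")
        if isinstance(latency, str) and latency.endswith("s"):
            latencies.append(float(latency[:-1]))  # "0.100s" -> 0.1 seconds
    total = sum(statuses.values())
    errors = sum(n for code, n in statuses.items() if code >= 500)
    return {
        "requests": total,
        "error_rate": errors / total if total else 0.0,
        "avg_latency_s": sum(latencies) / len(latencies) if latencies else 0.0,
    }


sample = "\n".join([
    '{"httpRequest": {"status": 200, "latency": "0.100s"}}',
    '{"httpRequest": {"status": 200, "latency": "0.200s"}}',
    '{"httpRequest": {"status": 503, "latency": "0.300s"}}',
])
print(summarize_lb_logs(sample))
```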

Cloud Load Balancing uses health checks to monitor your backends and passes requests only to backends that are healthy. This keeps the service reliable even when individual backends fail.
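Health checks typically change a backend's state only after several consecutive probe results agree, which keeps a single failed probe from flapping the backend in and out of rotation. The Python sketch below models that idea with thresholds named after the `healthyThreshold` and `unhealthyThreshold` settings of a Google Cloud health check; the default of 2 for both, the healthy starting state, and the backend names are assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class BackendHealth:
    healthy_threshold: int = 2    # consecutive successes needed to become healthy
    unhealthy_threshold: int = 2  # consecutive failures needed to become unhealthy
    healthy: bool = True          # simplification: start in the healthy state
    streak: int = field(default=0, init=False)  # results disagreeing with state

    def record(self, probe_ok: bool) -> bool:
        """Record one probe result and return the (possibly updated) state."""
        if probe_ok == self.healthy:
            self.streak = 0
            return self.healthy
        self.streak += 1
        needed = self.unhealthy_threshold if self.healthy else self.healthy_threshold
        if self.streak >= needed:
            self.healthy = probe_ok
            self.streak = 0
        return self.healthy


def healthy_backends(states: dict[str, BackendHealth]) -> list[str]:
    """Only these backends are eligible to receive requests."""
    return [name for name, s in states.items() if s.healthy]


states = {"vm-a": BackendHealth(), "vm-b": BackendHealth()}
for ok in (False, False):        # vm-b fails two probes in a row
    states["vm-b"].record(ok)
print(healthy_backends(states))  # ['vm-a']
```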

- What is Cloud Load Balancing? A Complete Guide - December 9, 2021
