In this blog post, we explain the Kubernetes architecture in detail. Unlike past solutions, which were meant to do one thing well, Kubernetes was built from the ground up with comprehensiveness in mind. Kubernetes is the preferred option for container orchestration because:

- It is a platform for automating deployment, scaling, and management of containerized applications across multiple hosts.

- It automates work that previously had to be done manually, such as the utilization and management of compute, network, and storage resources.

- It has various options to support customization in a workflow and higher-level automation.

Kubernetes Architecture

The Kubernetes architecture combines many helpful tools into one easy-to-use solution. It’s great for those who want something that can

- orchestrate containers

- recover from failures through self-healing

- load balance the traffic

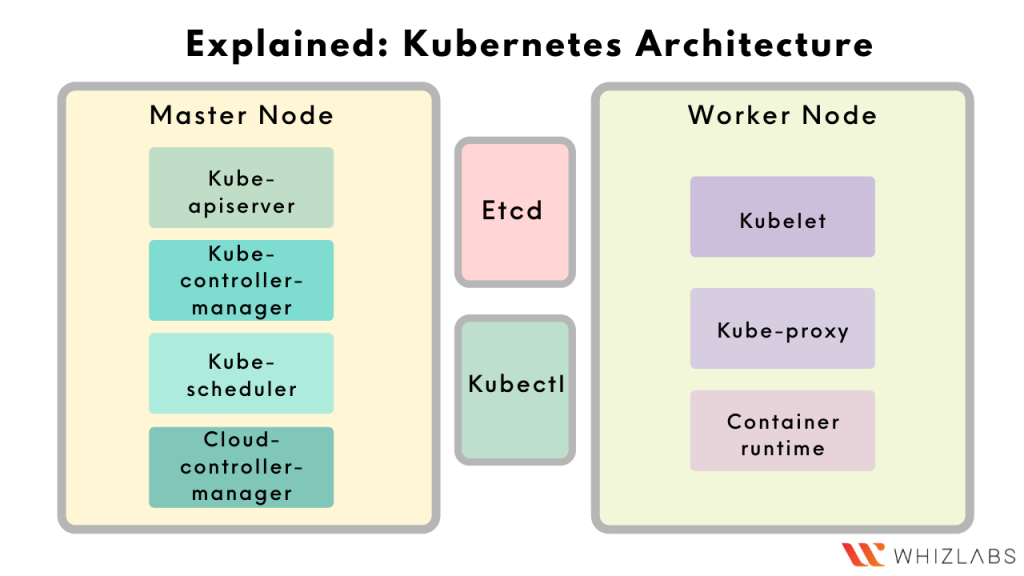

The diagram above provides a high-level overview of the Kubernetes architecture.

At a high level, Kubernetes follows a client-server architecture.

It consists of the following major components:

- master node

- etcd

- worker nodes

Master node

It maintains the integrity of the cluster and its vital components, keeps a check on how the components are interacting with one another, and works to make the actual state of system objects match the desired state.

Etcd

It is a simple, distributed key-value store that keeps a consistent record of the cluster state. It stores cluster data such as

- number of pods

- deployment states

- namespaces

- service discovery details.
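To make the key-value idea concrete, here is a minimal sketch of how cluster data could be laid out under hierarchical keys. `ToyStore` is a hypothetical in-memory stand-in, not the etcd client API, and the key paths are illustrative assumptions rather than a guaranteed layout.

```python
class ToyStore:
    """Hypothetical in-memory stand-in for etcd (the real etcd is distributed and replicated)."""

    def __init__(self):
        self._data = {}

    def put(self, key, value):
        self._data[key] = value

    def get(self, key):
        # Returns None when the key does not exist.
        return self._data.get(key)

    def list_prefix(self, prefix):
        # Return every (key, value) pair whose key starts with the prefix, sorted by key.
        matches = [(k, v) for k, v in self._data.items() if k.startswith(prefix)]
        return sorted(matches, key=lambda kv: kv[0])


store = ToyStore()
# The key paths below are illustrative assumptions.
store.put("/registry/namespaces/default", {"phase": "Active"})
store.put("/registry/pods/default/nginx", {"image": "nginx", "phase": "Pending"})
store.put("/registry/pods/default/redis", {"image": "redis", "phase": "Running"})

pods = store.list_prefix("/registry/pods/default/")
print(len(pods))  # number of pods recorded in the "default" namespace
```

Grouping records under a shared prefix is what makes questions such as "which pods exist in this namespace?" a simple prefix lookup.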

Worker node

The worker nodes are the components that actually run the containers. They are managed by the master node.

Each of these components (except etcd) in turn has different parts, each serving a specific purpose. To understand how the cluster works, it helps to look at each of these parts.

Master node components

Kube-apiserver

- The kube-apiserver is the main gateway to the cluster. The user can use it to interact with the cluster and perform actions such as creating, deleting, scaling, and updating different types of objects.

- Clients such as kubectl authenticate with the cluster via the kube-apiserver and also use it as a proxy/tunnel to nodes, pods, and services.

- This is the only component that communicates with the etcd cluster, making sure data is stored in etcd.

Kube-controller-manager

In order to understand this component, it is helpful to first gain an understanding of controllers.

Most resources contain metadata (such as labels and annotations), a desired state (the specified state), and an observed state (the current state). A controller in Kubernetes works to drive the actual state of an object toward its desired state.

For example, the replication controller maintains the desired number of pod replicas, and the endpoints controller populates Endpoints objects, which link services to their pods.

The kube-controller-manager is a collection of such controller processes that run in the background to execute the core control loops, watch the state of the cluster, and make changes that drive the current state toward the desired state.
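The control-loop idea can be sketched in a few lines. This is a simplified illustration, not the actual controller code: `reconcile` and `run_control_loop` are hypothetical names, and pods are represented as plain strings.

```python
import itertools


def reconcile(desired_replicas, running_pods):
    """Return the actions needed to move the observed state toward the desired state."""
    diff = desired_replicas - len(running_pods)
    if diff > 0:
        # Too few pods: ask for `diff` new ones.
        return [("create", None)] * diff
    if diff < 0:
        # Too many pods: delete the surplus (here, the last ones in the list).
        return [("delete", name) for name in running_pods[diff:]]
    return []


def run_control_loop(desired_replicas, running_pods, max_iterations=10):
    """Apply reconcile() repeatedly until no further actions are needed."""
    counter = itertools.count(1)
    pods = list(running_pods)
    for _ in range(max_iterations):
        actions = reconcile(desired_replicas, pods)
        if not actions:
            break  # observed state matches desired state
        for verb, name in actions:
            if verb == "create":
                pods.append(f"pod-new-{next(counter)}")
            else:
                pods.remove(name)
    return pods


print(run_control_loop(3, ["pod-a"]))
print(run_control_loop(1, ["pod-a", "pod-b", "pod-c"]))
```

The important property is that the loop only compares desired and observed state. It does not need to know why the two diverged.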

Kube-scheduler

It is responsible for scheduling pods (and the containers inside them) across the nodes in the cluster. It reads a pod’s operational requirements and schedules it on the best-fit node after taking various constraints into account, such as resource limitations or guarantees, and affinity and anti-affinity specifications.
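A rough way to picture this is as two phases: filter out nodes that cannot run the pod, then score the remaining ones. The sketch below assumes a very simple model. The `Node` and `PodRequest` types, the label-based constraint, and the scoring rule are all hypothetical and much simpler than the real scheduler.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    free_cpu_millicores: int
    free_memory_mib: int
    labels: dict


@dataclass
class PodRequest:
    cpu_millicores: int
    memory_mib: int
    node_selector: dict  # labels the chosen node must carry


def fits(node, pod):
    """Filter phase: does the node have enough resources and the required labels?"""
    has_resources = (
        node.free_cpu_millicores >= pod.cpu_millicores
        and node.free_memory_mib >= pod.memory_mib
    )
    matches_labels = all(node.labels.get(k) == v for k, v in pod.node_selector.items())
    return has_resources and matches_labels


def score(node, pod):
    """Score phase (assumed rule): prefer the node with the most CPU left after placement."""
    return node.free_cpu_millicores - pod.cpu_millicores


def pick_node(nodes, pod):
    candidates = [n for n in nodes if fits(n, pod)]
    if not candidates:
        return None  # the pod stays unscheduled
    return max(candidates, key=lambda n: score(n, pod)).name


nodes = [
    Node("node-a", 500, 1024, {"disk": "ssd"}),
    Node("node-b", 2000, 4096, {"disk": "hdd"}),
    Node("node-c", 1500, 2048, {"disk": "ssd"}),
]
print(pick_node(nodes, PodRequest(1000, 1024, {"disk": "ssd"})))
```

In this example, node-a is filtered out for lack of CPU and node-b for the wrong label, so only node-c remains.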

Cloud-controller-manager

This component integrates into each public cloud for optimal support of availability zones, VM instances, storage services, and network services for DNS, routing, and load balancing.

For example, when a controller needs to check whether a node has been terminated, or to set up routes, load balancers, or volumes in the cloud infrastructure, the cloud-controller-manager handles it.

Worker node components

Kubelet

The kubelet is the primary agent on each worker node and one of the most important components in Kubernetes.

- It enforces the desired state of a resource on its node, ensuring that pods and their containers are running as specified.

- It’s responsible for sending health reports about the worker node where it is running to the master node.

Kube-proxy

Kube-proxy is a proxy service that runs on each worker node and forwards requests destined for specific pods/containers across the various isolated networks in a cluster.
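As a simplified picture of this forwarding, the sketch below maps a service name to a list of pod addresses and hands out one address per request in round-robin order. `ToyServiceProxy` and the addresses are hypothetical, and round-robin is only one possible strategy. How the real kube-proxy distributes traffic depends on its configuration.

```python
import itertools


class ToyServiceProxy:
    """Hypothetical stand-in that picks a backend pod for each request to a service."""

    def __init__(self, endpoints_by_service):
        # One endless round-robin iterator per service.
        self._cycles = {
            service: itertools.cycle(endpoints)
            for service, endpoints in endpoints_by_service.items()
        }

    def route(self, service):
        # Return the next pod address for this service.
        return next(self._cycles[service])


proxy = ToyServiceProxy({"web": ["10.0.0.1:80", "10.0.0.2:80"]})
chosen = [proxy.route("web") for _ in range(3)]
print(chosen)
```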

Container runtime

It is the software component that runs the containers on the worker nodes. Common examples of container runtimes are runC, containerd, Docker, and Windows Containers.

These were the components of the Kubernetes cluster itself. In practice, one more component is used alongside them: kubectl.

Kubectl

It is a command-line utility for interacting with the kube-apiserver. It lets us issue simple, human-friendly commands to perform actions via the kube-apiserver.

Some example commands are:

- To create a new pod running nginx: `kubectl run nginx --image=nginx`

- To create a new deployment: `kubectl create deployment nginx --image=nginx --replicas=2`
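Behind commands like these, kubectl sends an object description to the kube-apiserver. As a rough sketch, the pod from the first command corresponds to a manifest along the following lines. The helper name `pod_manifest` is hypothetical, and the manifest that kubectl actually generates contains more fields than shown here.

```python
import json


def pod_manifest(name, image):
    """Build a minimal Pod object description as a plain dictionary."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"run": name}},
        "spec": {"containers": [{"name": name, "image": image}]},
    }


manifest = pod_manifest("nginx", "nginx")
print(json.dumps(manifest, indent=2))
```

The printed JSON could be saved to a file and submitted with `kubectl apply -f <file>`, which is the declarative counterpart of the imperative commands above.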

So, these were the different components of Kubernetes.

Now, to gain a better understanding of how the different components interact with each other, let’s walk through the steps involved in performing an action on the cluster.

Steps for creating a new pod in a Kubernetes cluster

Step 1: User request processing

- The user issues a command requesting the creation of a new pod via kubectl.

- From the kubectl CLI utility, the request reaches the kube-apiserver which validates the user request.

- Once the validation is successful, the kube-apiserver creates a new key-value record for this new pod inside etcd.

Step 2: Worker node scheduling process

- The kube-scheduler continuously watches the kube-apiserver and learns that a new pod needs a node.

- The kube-scheduler evaluates parameters such as resource requirements and affinity rules to determine a worker node where the new pod can be scheduled.

- The kube-scheduler reports its choice to the kube-apiserver, which records the worker node assignment in etcd.

- The kubelet on the chosen worker node, which watches the kube-apiserver, receives the necessary information about the pod, such as the image name and environment variables, and starts its containers.

Step 3: Pod status

- The kubelet continuously reports the pod’s current status to the kube-apiserver.

- The kube-apiserver writes the state of the new pod to the etcd key-value store.

- Once the pod starts running and its state has been updated in etcd, the kube-apiserver reports this to the user.
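The three steps can be tied together in a small single-process simulation. This is only a sketch under simplifying assumptions. The names `ApiServer`, `schedule`, and `kubelet_sync` are hypothetical, a dictionary stands in for etcd, and the real components communicate over the network instead of calling each other in sequence.

```python
class ApiServer:
    """Toy API server: the only component that touches the store (a dict standing in for etcd)."""

    def __init__(self):
        self._store = {}

    def create_pod(self, name, image):
        # Step 1: validate the request, then persist a record for the new pod.
        if not name or not image:
            raise ValueError("pod name and image are required")
        if name in self._store:
            raise ValueError(f"pod {name!r} already exists")
        self._store[name] = {"image": image, "node": None, "phase": "Pending"}

    def unscheduled_pods(self):
        return [n for n, p in self._store.items() if p["node"] is None]

    def bind(self, name, node):
        self._store[name]["node"] = node

    def pods_on(self, node):
        return [n for n, p in self._store.items() if p["node"] == node]

    def set_phase(self, name, phase):
        self._store[name]["phase"] = phase

    def get_pod(self, name):
        return dict(self._store[name])


def schedule(api, free_slots):
    # Step 2: pick a node for each pending pod (assumed rule: most free slots wins).
    for name in api.unscheduled_pods():
        node = max(free_slots, key=free_slots.get)
        free_slots[node] -= 1
        api.bind(name, node)


def kubelet_sync(api, node):
    # Step 3: the node agent "starts" its pods and reports their status back.
    for name in api.pods_on(node):
        api.set_phase(name, "Running")


api = ApiServer()
api.create_pod("nginx", "nginx")
schedule(api, {"worker-1": 2, "worker-2": 5})
kubelet_sync(api, "worker-2")
print(api.get_pod("nginx"))
```

Note that every read and write goes through `ApiServer`, mirroring the point that the kube-apiserver is the only component that talks to etcd.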

Summary

That covers the main components of a Kubernetes cluster’s architecture. Keep learning and keep practicing! If you have any questions about Kubernetes architecture, please contact us and we will respond. You can also learn more about this topic in our Kubernetes certification course.
