Claude Certified Architect: Agentic AI Era Begins

Anthropic didn’t just launch a certification. It quietly signalled where enterprise AI is heading next.

On the surface, Claude Certified Architect – Foundations (CCA-F) looks like another technical credential in the increasingly crowded AI learning market.

Another exam. Another badge. Another signal for LinkedIn.

But that reading misses a bigger shift. This launch isn’t really about test-taking. It’s about what the AI industry is beginning to treat as a real, deployable skill.

For the last two years, the market rewarded a familiar kind of AI fluency: prompt experimentation, tool familiarity, model comparisons, and surface-level implementation. That phase was useful. It lowered the barrier to entry and brought more practitioners into the ecosystem. But it is no longer enough.

Enterprise AI is moving past experimentation.

As enterprise AI adoption deepens, organisations will increasingly need practitioners who can design agentic systems, manage workflow reliability, integrate tools and context, and turn large language models into business infrastructure.

The launch of Claude Certified Architect marks a shift from AI familiarity to AI execution. And more importantly, it reflects the rise of agentic AI as a deployable business capability, not just a product demo.

What does the Claude Certified Architect – Foundations Exam actually cover?

Anthropic has launched the Claude Certified Architect – Foundations (CCA-F) as a new technical certification. It focuses on validating hands-on expertise in building with Claude. Anthropic positions it as an associate-level certification for technical practitioners at partner companies who already have foundational knowledge and are ready to demonstrate deeper implementation capability.

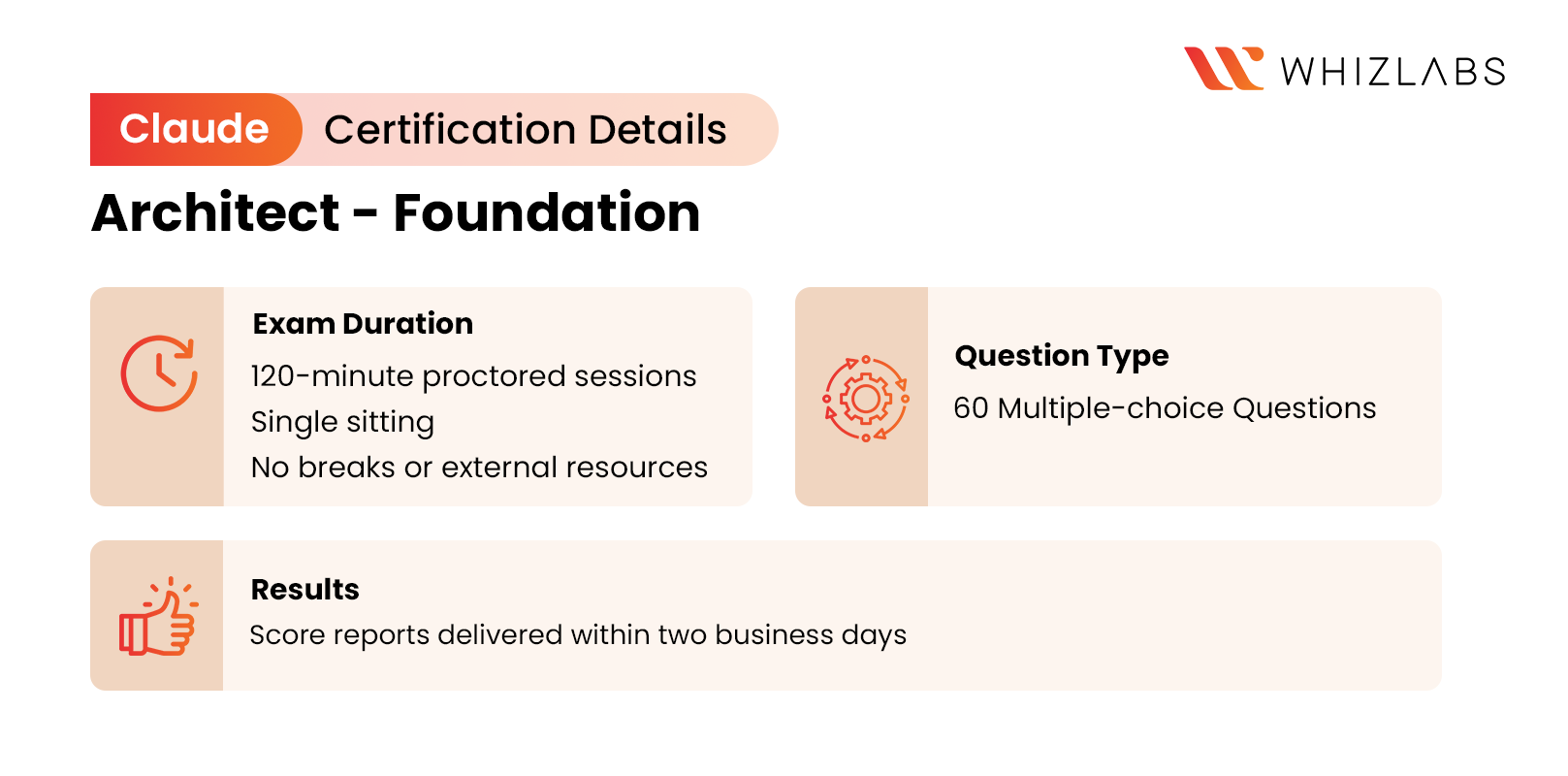

The Claude Certified Architect – Foundations (CCA-F) Exam Format

- Agentic architecture and orchestration (27%)

- Claude Code configuration and workflows (20%)

- Prompt engineering and structured output (20%)

- Tool design and MCP integration (18%)

- Context management and reliability (15%)

Anthropic is not prioritising “how well you can use Claude” in a generic sense. It’s testing whether you understand “how to operationalise Claude inside real systems.”

In other words, the exam reflects a bigger industry transition: from “Can you use an AI model?” to “Can you design and operationalise an AI system?”

The exam also leans heavily into scenario-based evaluation, with candidates tested on simulated enterprise-style deployments such as:

- Customer support automation agents

- Multi-agent research systems

- CI/CD integrations using Claude Code

- Structured data extraction workflows

Anthropic is trying to assess not just conceptual knowledge, but implementation judgment under realistic conditions.

Note: The certification is currently available only to employees within the Claude Partner Network. The first 5,000 partner-company users will receive free vouchers; after that, the exam will cost $99 per attempt.

The pricing and rollout strategy matter more than they seem. Because this is not just a learning initiative. It’s an enablement strategy.

The Claude Certified Architect – Foundations launch goes beyond a technical exam.

The launch signals how the market for AI skills is maturing.

For a while, broad AI awareness was enough to stay ahead of the curve. That is no longer the case. The market is beginning to reward a different layer of capability:

- Implementation patterns

- Orchestration logic

- System reliability

- Context handling

- Deployable agent workflows

Agentic AI is more than a better chatbot wrapped in a new label. It brings a very different technical and operational burden. Once AI systems begin coordinating tools, managing state, decomposing tasks, and executing multi-step workflows, the required skill set moves much closer to systems architecture than prompt experimentation.

“That is what this certification is about: Anthropic is signalling a shift from AI familiarity to AI execution.”

Once enterprise adoption becomes the priority, model quality alone stops being enough. Companies need a way to create trusted implementation layers around their tools. That means:

- Trained practitioners

- Repeatable deployment patterns

- Ecosystem-standard workflows

- Externally visible proof of technical capability

This is the direction in which any company serious about AI will eventually move.

The Rise of the Agentic AI Skill Stack

This is where the shift gets interesting. Anthropic is effectively certifying a layer of AI work that much of the market still underestimates.

Agentic AI changes what skill actually means. The moment AI systems start planning tasks, using tools, managing context, and coordinating across multiple steps, the nature of the work changes.

You are no longer just interacting with a model. You are designing behaviour, constraints, and execution logic inside a system that now has some degree of autonomy.

This requires a very different skill stack, one that goes beyond prompting to include:

- Multi-agent orchestration

- Coordinator-subagent patterns

- Task decomposition

- Session state management

- Tool calling

- Context reliability

- Workflow enforcement

- Structured outputs

- MCP integration

This is where AI work starts looking less like experimentation and more like systems architecture. It is a huge shift, and an overdue one.
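To make a few of the items above concrete, here is a minimal sketch of a coordinator-subagent pattern with task decomposition, session state, and basic workflow enforcement. It is an illustration under stated assumptions, not Anthropic's implementation or exam material: the `Task` and `Coordinator` names are hypothetical, the plan is hard-coded, and the subagents are plain Python functions standing in for model calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Task:
    """One unit of work produced by task decomposition (hypothetical type)."""
    kind: str      # which subagent should handle this task
    payload: str   # the input handed to that subagent


@dataclass
class Coordinator:
    """Routes decomposed tasks to subagents and records structured results."""
    subagents: Dict[str, Callable[[str], str]]
    state: List[dict] = field(default_factory=list)  # session state, one record per step

    def decompose(self, request: str) -> List[Task]:
        # Assumption: a fixed two-step plan. A real system would ask the model to plan.
        return [Task("research", request), Task("summarise", request)]

    def run(self, request: str) -> List[dict]:
        for task in self.decompose(request):
            handler = self.subagents.get(task.kind)
            if handler is None:
                # Workflow enforcement: fail loudly instead of silently skipping a step.
                raise ValueError(f"no subagent registered for {task.kind!r}")
            self.state.append({"step": task.kind, "output": handler(task.payload)})
        return self.state


# Stub subagents standing in for model calls.
def research(topic: str) -> str:
    return f"notes on {topic}"


def summarise(topic: str) -> str:
    return f"summary of {topic}"


coordinator = Coordinator({"research": research, "summarise": summarise})
results = coordinator.run("refund policy")
```

Even in a toy like this, the design questions are architectural ones: who owns the plan, where state lives, and what happens when a step has no handler.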

Yet much of the conversation about “AI-skilled professionals” is still stuck at model literacy. Organisations increasingly expect talent who can understand models and build systems around them that are reliable, observable, maintainable, and actually useful in business environments.

This certification reinforces the shift to the next serious layer of AI expertise: not just knowing the model, but knowing how to structure the system around it.

Anthropic Just Did What Every Major AI Company Will Eventually Have to Do

This is where the launch becomes strategically important. If you zoom out, Anthropic is solving a problem that every serious AI platform company is eventually going to run into.

A strong model, strong benchmarks, and even enterprise demand do not scale enterprise adoption on their own. That requires a credible implementation layer around the product, which means:

- Trained implementation partners

- Validated technical talent

- Repeatable deployment patterns

- Ecosystem trust

And that is exactly what Anthropic is building. This is not just about giving individuals a way to prove their skills. It is about scaling partner readiness, enterprise confidence, deployment standardisation, and go-to-market maturity.

With this, Anthropic is making Claude easier to trust, easier to deploy, and easier to scale through people who know how to implement it properly.

And the rollout strategy makes that even clearer. Offering the first 5,000 vouchers free to partner-company users is not just a nice incentive, community support, or generosity. It is ecosystem acceleration.

The faster its partner ecosystem gets trained, the faster enterprises can deploy Claude with confidence. And once certifications start appearing on LinkedIn, partner websites, proposals, and consulting profiles, the credential itself starts functioning as a market signal.

And it is a smart move. This is exactly how cloud, cybersecurity, and platform ecosystems mature.

Why Does This Matter for AI Engineers and Architects?

If you are an AI engineer, solutions architect, platform engineer, technical consultant, developer advocate, or someone building internal AI systems inside a product team, this matters more than it may seem.

Knowing AI, writing prompts, trying out tools, and building cool prototypes are no longer differentiators. The questions that matter now are:

- Can you design systems, not just prompts?

- Can you build workflows, not just prototypes?

- Can you manage context, tools, and reliability in production?

- Can you think in orchestration, not just output?

This is the market shift that is going to catch a lot of people off guard.

And if you’re an engineer or architect, this should feel familiar, because it is exactly what happened with cloud.

At first, knowing the services was enough. Then, knowing how to build resilient systems became the actual skill. AI is heading the same way.

Reality Check: Certifications Still Don’t Replace Real Experience

A certification alone does not make someone an agentic AI architect.

No exam, no matter how well-designed, can fully replicate what it takes to build AI systems in production. Real capability still comes from:

- Hands-on building

- Debugging

- Deployment failures

- System trade-offs

- Production pressure

In reality, you understand context management after watching a system fail because the wrong context was carried forward. You understand orchestration after a workflow breaks under complexity, and you have to redesign it.
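As a rough illustration of that failure mode, the sketch below scopes context to the current task so that messages from an earlier task are not carried forward. The `Message` type, the `build_context` helper, and the message-count budget are all hypothetical simplifications; a production system would more likely budget by tokens and use richer relevance rules.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Message:
    text: str
    task_id: str          # which task produced this message
    pinned: bool = False  # pinned messages (e.g. standing rules) always survive


def build_context(history: List[Message], task_id: str, max_messages: int) -> List[Message]:
    """Keep pinned messages plus the most recent messages for the current task."""
    pinned = [m for m in history if m.pinned]
    relevant = [m for m in history if not m.pinned and m.task_id == task_id]
    budget = max(max_messages - len(pinned), 0)
    # Guard the zero case: relevant[-0:] would return the whole list.
    recent = relevant[-budget:] if budget else []
    return pinned + recent


history = [
    Message("Always answer in English.", "system", pinned=True),
    Message("Order 17 was refunded.", "ticket-1"),
    Message("Customer asks about order 42.", "ticket-2"),
    Message("Order 42 shipped yesterday.", "ticket-2"),
]

context = build_context(history, "ticket-2", max_messages=3)
texts = [m.text for m in context]
```

Here the refund note from `ticket-1` never reaches the context for `ticket-2`, which is the kind of stale carry-over the paragraph above describes.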

The industry should be careful not to confuse credentialing with capability. Even so, a well-designed certification can still be valuable if it validates the right things:

- Applied judgment

- Architectural thinking

- Scenario-based implementation skill

- Production-oriented decision-making

And to Anthropic’s credit, Claude Certified Architect appears to be moving in that direction. That’s a much better signal than just testing terminology or feature awareness.

The Start of a New AI Credential Economy

Claude Certified Architect may look like a small launch: a niche certification with a partner-only rollout in an already noisy AI market. In practice, it signals something much bigger.

- AI skills are maturing.

- Agentic workflows are becoming enterprise infrastructure.

- The market is beginning to formalise what “real AI expertise” actually looks like.

Until now, AI credibility has mostly lived in demos, side projects, GitHub repos, tool familiarity, and whoever sounded most fluent on the timeline. That phase was always temporary.

As enterprise adoption expands, the market will increasingly demand clear signals of implementation capability over enthusiasm. That marks the start of a new phase: a credential economy for applied AI systems.

The AI industry is moving beyond rewarding people who can experiment with models. It has now started to reward those who can operationalise them. And that shift has a second-order implication that often gets overlooked.

Going forward, certifications will evolve to test hands-on implementation rather than simply confer badges. That transition is best supported with practice, and we at Whizlabs are on a mission to empower professionals with implementation skills.

Note: We are actively working on Claude Certified Architect – Foundations practice tests and resources. So stay tuned.

Because in the end, the market rewards those who can build with AI reliably.

Still have questions? Drop us an email at [email protected]. We will sort it out.

- Claude Certified Architect: Agentic AI Era Begins - April 1, 2026